Reactor Summary for Apache Hadoop HDFS Project 3.3.0:

There are currently more than 700 tests in the hadoop-hdfs-project submodule, but five hours is a bit long. We decided to look around the Apache Software Foundation Jenkins to see how the tests for HDFS are run. The Hadoop PMC (Project Management Committee) team uses Apache Yetus to launch a test run after every pull request on GitHub. For example, this pull request triggered this Jenkins build. In the output logs, we can see that the tests are launched with the -Pparallel-tests parameter.
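As a rough illustration of the -Pparallel-tests parameter, the sketch below wraps a Maven test invocation in a small shell function. The function name `run_parallel_tests` and the `DRY_RUN` switch are hypothetical helpers introduced here for illustration. The optional `testsThreadCount` property is the one described in Hadoop's BUILDING.txt, and the exact command line used by the Jenkins job may well differ.

```shell
#!/bin/sh
# Sketch: run Maven tests with Hadoop's parallel-tests profile.
# Assumption: this is invoked from a Hadoop source checkout (for example
# from the hadoop-hdfs-project directory) with Maven installed.
set -eu

# run_parallel_tests [THREADS]
#   THREADS is optional; when given it is passed as -DtestsThreadCount.
#   With DRY_RUN=1 the command is printed instead of executed.
run_parallel_tests() {
  threads="${1:-}"
  # Build the command in the positional parameters so it stays one word per argument.
  set -- mvn test -Pparallel-tests
  if [ -n "${threads}" ]; then
    set -- "$@" "-DtestsThreadCount=${threads}"
  fi
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}
```

Calling `DRY_RUN=1 run_parallel_tests 8` only prints the command, which is a convenient way to check it before committing to a long test run.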

The first thing we will do is git clone the Apache Hadoop repository:

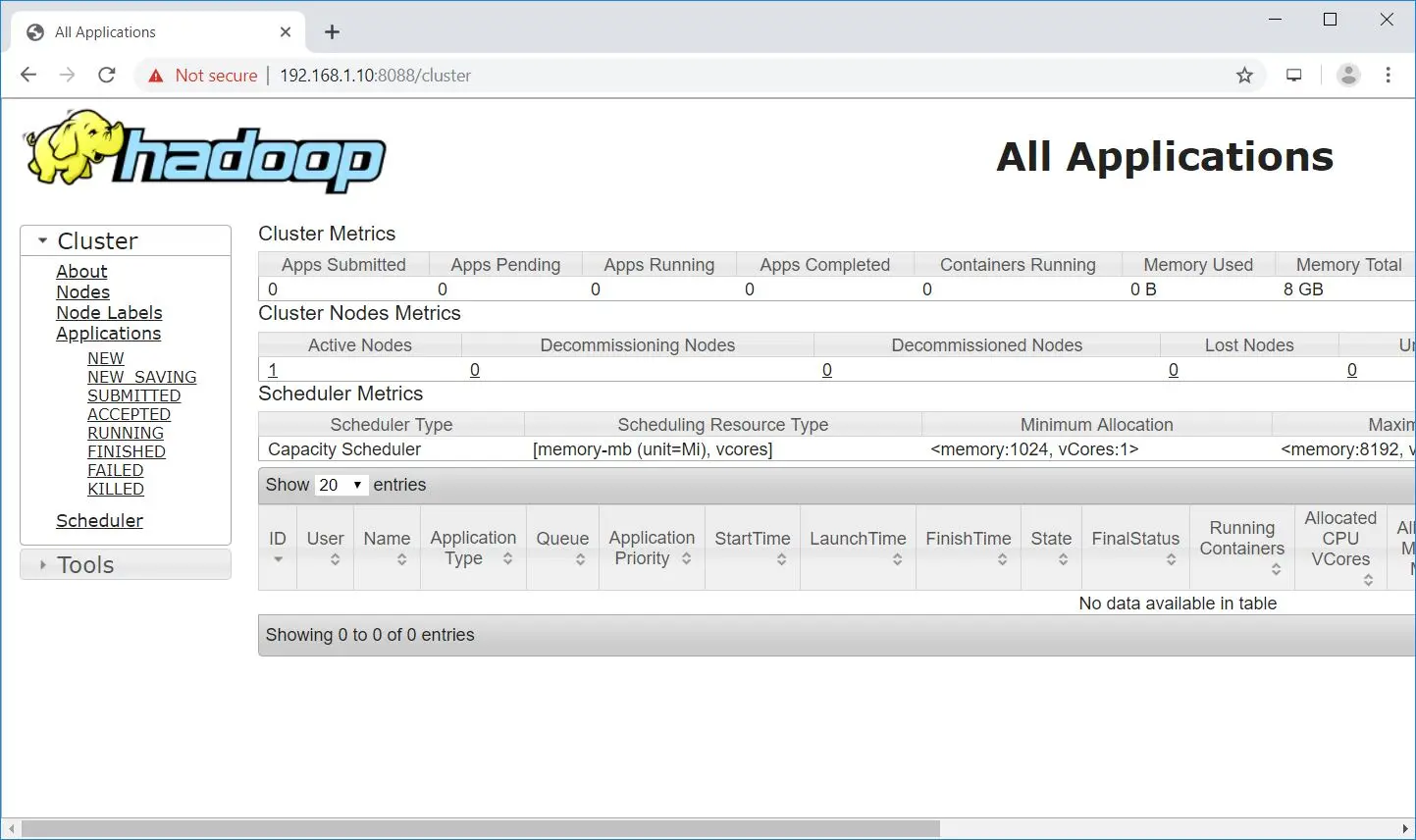

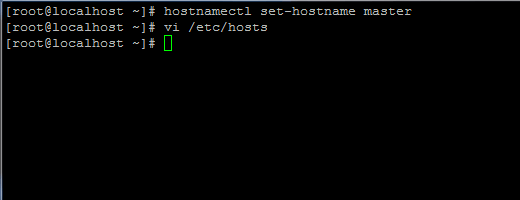
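The clone step can be scripted along the following lines. This is a minimal sketch: the URL is the public GitHub mirror of Apache Hadoop, while the function name `clone_hadoop`, the default directory name `hadoop` and the `DRY_RUN` switch are assumptions made for illustration.

```shell
#!/bin/sh
# Sketch: clone the Apache Hadoop sources unless a checkout already exists.
set -eu

# Public GitHub mirror of the Apache Hadoop repository.
REPO_URL="https://github.com/apache/hadoop.git"

# clone_hadoop [DEST]
#   DEST defaults to "hadoop" (an assumed directory name).
#   With DRY_RUN=1 the git command is printed instead of executed.
clone_hadoop() {
  dest="${1:-hadoop}"
  if [ -d "${dest}/.git" ]; then
    echo "skip: ${dest} is already a git checkout"
    return 0
  fi
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "git clone ${REPO_URL} ${dest}"
  else
    git clone "${REPO_URL}" "${dest}"
  fi
}
```

Running `clone_hadoop` with no argument clones into `./hadoop`. Because an existing checkout is skipped, the function is safe to call again from an automation script.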

Commercial Apache Hadoop distributions have come and gone. The two leaders, Cloudera and Hortonworks, have merged: HDP is no more and CDH is now CDP. MapR has been acquired by HPE and IBM BigInsights has been discontinued. Some of these organizations are important contributors to the Apache Hadoop project, and their clients rely on them to get secure, tested and stable builds of Hadoop (and other software of the Big Data ecosystem).

Note: Since the publication of this article, Microsoft announced that they developed a fork of HDP 3.1.6.2 called “HDInsight-4.1.0.26”. This version is now automatically picked while provisioning an Azure HDInsight cluster.

Hadoop is now a decade-old project with thousands of commits, dozens of dependencies and a complex architecture. This got us thinking: how hard is it to build and run Hadoop from source in 2020? In this article we will go through the process of building, testing, patching and running a minimal working Hadoop cluster from the Apache Hadoop source code. All the commands shown below can be scripted for automation purposes.
